LifeJet

A care companion that heals one experiment at a time.

LifeJet is a care companion built specifically to apply AI to functional medicine. I built it solo across three workstreams: an agentic playbook the agent moves through at the user's pace, a product where the agent's output renders as persistent artifacts the user can return to, and the engineering decisions that keep voice and chat writing to one source of truth.

How functional medicine becomes a real product.

The founder is deeply focused on longevity and functional medicine. He was frustrated that ChatGPT couldn't organize his thinking about either: a chat thread starts cold every time, recommendations stack into long protocols, and nothing circles back to whether the last call worked.

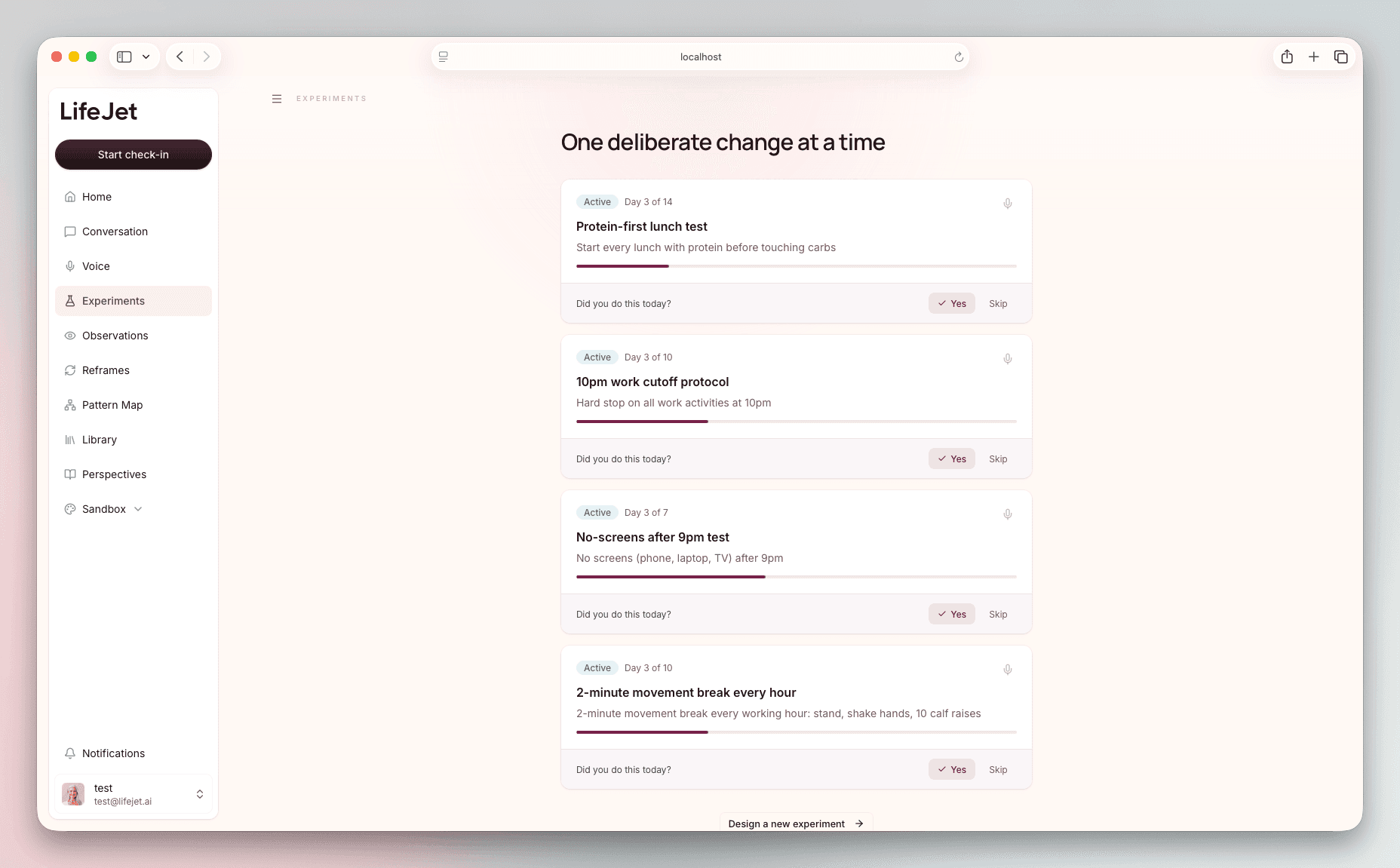

Functional medicine works the opposite way. One keystone experiment at a time, observed over a few days, then connected to the larger pattern. To translate that into a real product I needed a coaching arc the agent could move through at the user's pace, cards that turned conversation into objects worth carrying forward, a memory layer that survived between sessions, and persistent screens that made experiments, reframes, observations, and the pattern map feel like one care relationship. Multiple functional-medicine practitioners shaped the playbook alongside the build.

The build landed on three concurrent workstreams. I led all three.

A coaching arc the agent moves through.

The category fails because conversation is treated as information delivery: symptoms in, protocols out, no checkpoint where the user can see how the conversation is actually progressing. Sessions drift, and the next one starts from scratch.

I built the playbook around an arc the agent moves through at the user's pace. Mirror: validate before analyzing, pure conversation, no tools. Pattern: synthesize what's been observed into one believable explanation, with the agent capturing the structured pattern for memory through an invisible tool call. Reframe: produce alternative frames as a card the user can compare and save. Experiment: package one keystone action with a guardrail and a single tracking cue noticeable inside a few days.

The agent never announces phase transitions. It stays in a phase until the user is ready to move.
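As a rough illustration of the arc, the sketch below models the four phases, the tools each one may use, and the rule that the agent stays put until the user is ready. The tool names and the `userReady` flag are assumptions made for the example; the source does not say how readiness is detected, and in practice that judgment likely lives in the agent's prompt rather than in a boolean.

```typescript
// The four phases of the coaching arc, in order.
type Phase = "mirror" | "pattern" | "reframe" | "experiment";

const PHASE_ORDER: readonly Phase[] = ["mirror", "pattern", "reframe", "experiment"];

// Hypothetical tool names. Mirror is pure conversation, so it has none.
const PHASE_TOOLS: Record<Phase, readonly string[]> = {
  mirror: [],
  pattern: ["capturePattern"], // invisible: records the structured pattern
  reframe: ["showReframes"], // deliverable: renders a Reframe card
  experiment: ["showExperiment"], // deliverable: renders an Experiment card
};

// Stay in the current phase until the user is ready, then advance one step.
// The final phase has nowhere further to go.
function nextPhase(current: Phase, userReady: boolean): Phase {
  if (!userReady) return current;
  const index = PHASE_ORDER.indexOf(current);
  return PHASE_ORDER[Math.min(index + 1, PHASE_ORDER.length - 1)];
}
```

Nothing in this sketch emits a message on transition, which matches the rule that the agent never announces a phase change.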

Tools the agent chooses when to fire.

The tools sit behind the phases. The deliverable ones render cards the user can act on: an Experiment plan, a set of Reframes to compare, a check-in prompt for an active experiment. The invisible ones modify saved artifacts in place when the agent has new information to record: capturing a structured pattern into memory, updating a Reframe, updating an Experiment, updating an Observation, recording a check-in. A generation-only suggestion tool emits short follow-up prompts after every response, with no card and no execute step. Whether any tool fires is the agent's call.
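One way to picture the three tool categories is a small registry keyed by kind. This is a sketch under assumptions: every tool name below is hypothetical, and treating deliverable and invisible tools as having an execute step is inferred from the suggestion tool being singled out as the one without it.

```typescript
type ToolKind = "deliverable" | "invisible" | "generationOnly";

interface ToolSpec {
  name: string; // hypothetical identifier, not taken from the source
  kind: ToolKind;
}

const TOOLS: readonly ToolSpec[] = [
  { name: "experimentPlan", kind: "deliverable" },
  { name: "reframeSet", kind: "deliverable" },
  { name: "checkInPrompt", kind: "deliverable" },
  { name: "capturePattern", kind: "invisible" },
  { name: "updateReframe", kind: "invisible" },
  { name: "updateExperiment", kind: "invisible" },
  { name: "updateObservation", kind: "invisible" },
  { name: "recordCheckIn", kind: "invisible" },
  { name: "suggestFollowUps", kind: "generationOnly" },
];

// Only deliverable tools put a card in the thread.
function rendersCard(tool: ToolSpec): boolean {
  return tool.kind === "deliverable";
}

// Assumption: everything except the generation-only tool runs server-side work.
function hasExecuteStep(tool: ToolSpec): boolean {
  return tool.kind !== "generationOnly";
}
```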

Memory that survives the next session.

A care relationship that resets every session is just chat. To become coaching, it has to remember on its own without an intake form, without the user re-explaining themselves, and without burying the model in a transcript it has to re-parse every turn. A flat conversation log is the wrong shape: it has no retention semantics, it dilutes the signal, and it grows linearly forever.

Memory the next session can build on.

LifeJet writes memory through Mem0, with typed buckets for distinct memory categories: identity facts that don't expire, the user's current health profile and constraints (365 days), the active intervention protocol (180 days, its own queryable surface so the agent can ask "what's active" without scanning a transcript), short conversation summaries that fall off after a month, and working hypotheses (90 days) that wait for validation.
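The retention windows above can be written down as a small table. The sketch below is illustrative only: the bucket names are hypothetical, "a month" is assumed to mean 30 days, and no Mem0 call is shown because the source does not describe how expiry is passed to it.

```typescript
type Bucket =
  | "identity"
  | "healthProfile"
  | "activeProtocol"
  | "conversationSummary"
  | "hypothesis";

// Retention in days per bucket; null means the memory never expires.
const RETENTION_DAYS: Record<Bucket, number | null> = {
  identity: null,
  healthProfile: 365,
  activeProtocol: 180,
  conversationSummary: 30, // assumption: "a month" taken as 30 days
  hypothesis: 90,
};

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Compute when a memory written at `writtenAt` should fall off, if ever.
function expiresAt(bucket: Bucket, writtenAt: Date): Date | null {
  const days = RETENTION_DAYS[bucket];
  if (days === null) return null;
  return new Date(writtenAt.getTime() + days * MS_PER_DAY);
}
```

For example, a hypothesis written at 2025-01-01T00:00:00Z would expire at 2025-04-01T00:00:00Z, while an identity fact has no expiry at all.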

After every assistant turn (voice or text) a background job classifies the exchange and writes what's worth keeping into the right bucket. The next turn loads the relevant memory back into the system prompt as a context layer. The relationship compounds because the memory model was built for compounding.
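Loading memory back as a context layer might look something like the following. It is a minimal sketch: the `MemoryItem` shape, the section heading text, and the function name are all assumptions, and the retrieval step that selects which memories are relevant is left out.

```typescript
interface MemoryItem {
  bucket: string; // e.g. "activeProtocol" (hypothetical label)
  text: string;
}

// Append retrieved memories to the base system prompt as a separate layer.
// With nothing to load, the base prompt is returned unchanged.
function withMemoryContext(basePrompt: string, memories: readonly MemoryItem[]): string {
  if (memories.length === 0) return basePrompt;
  const lines = memories.map((m) => `- [${m.bucket}] ${m.text}`);
  return `${basePrompt}\n\nWhat you already know about this user:\n${lines.join("\n")}`;
}
```

Keeping memory as its own layer, rather than replaying a transcript, is what lets the prompt stay short as the relationship grows.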

Experiments, Reframes, Observations.

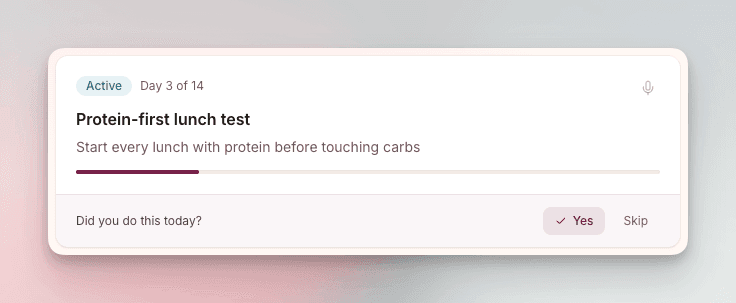

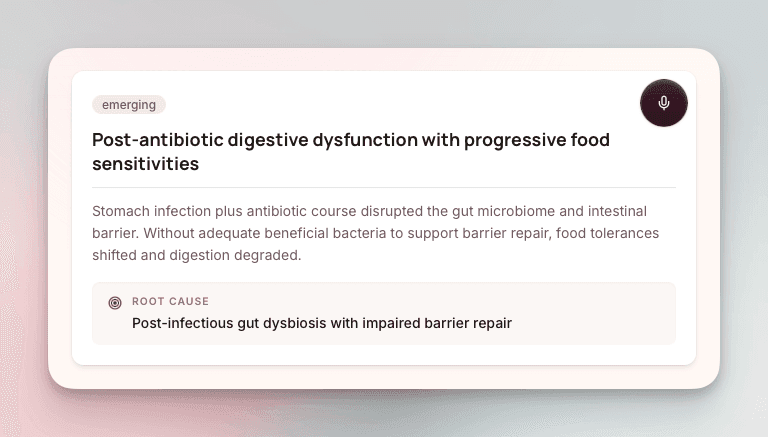

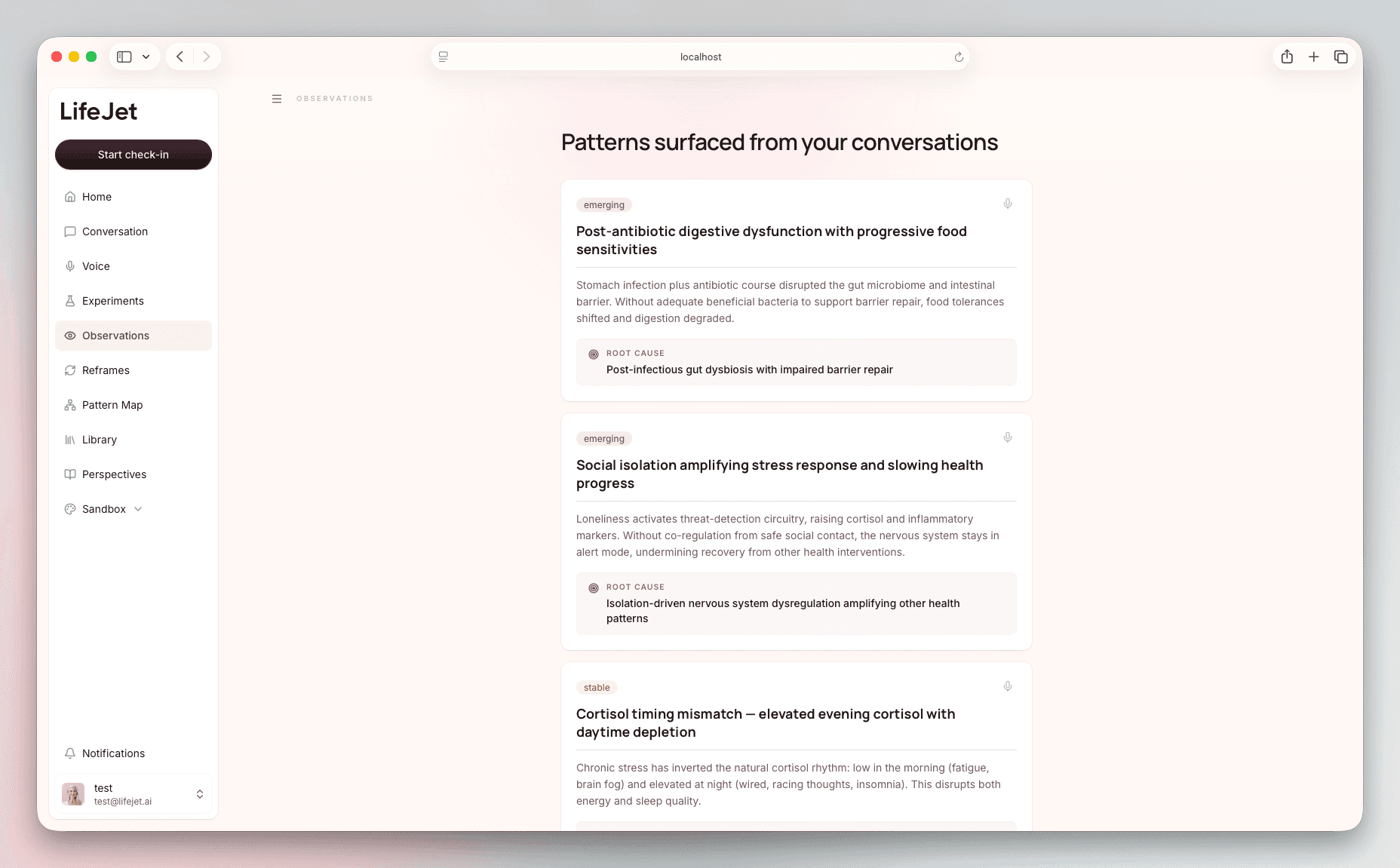

Persistent artifacts sit alongside the memory layer. Experiments carry the keystone action (the action itself, what to keep fixed, the tracking cue, the success threshold) and supersede prior versions, so the user's history of what they've tried reads as a single evolving line. Reframes are saveable identity-level shifts: better questions to hold, framed against the moment to reach for them. Observations are promoted patterns: the agent's working theory of the user's system after it has earned enough confidence to become user-facing.
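To make the "single evolving line" concrete, here is a sketch of an Experiment record with a supersede pointer and a helper that walks the chain. Field names such as `supersedesId` are assumptions for illustration; the source names the four content fields but not the schema.

```typescript
interface Experiment {
  id: string;
  action: string;
  keepFixed: string;
  trackingCue: string;
  successThreshold: string;
  supersedesId: string | null; // hypothetical pointer to the prior version
}

// Walk back from the latest version and return the history oldest-first.
// The `seen` set guards against an accidental cycle in the data.
function versionChain(all: readonly Experiment[], latestId: string): Experiment[] {
  const byId = new Map<string, Experiment>();
  for (const e of all) byId.set(e.id, e);

  const chain: Experiment[] = [];
  const seen = new Set<string>();
  let cursor = byId.get(latestId);
  while (cursor && !seen.has(cursor.id)) {
    chain.push(cursor);
    seen.add(cursor.id);
    cursor = cursor.supersedesId ? byId.get(cursor.supersedesId) : undefined;
  }
  return chain.reverse();
}
```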

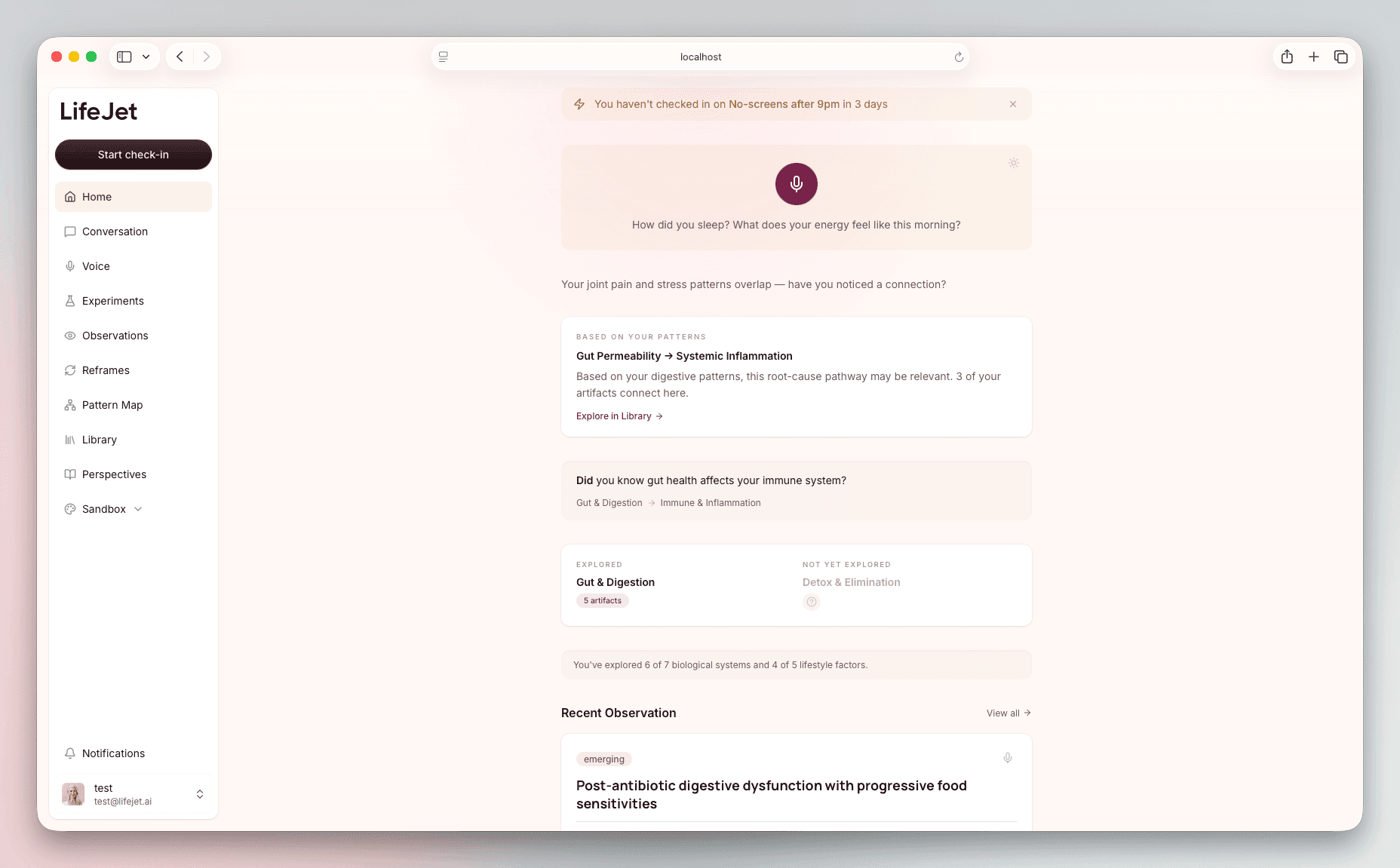

The product shots make that division visible. An experiment asks for one check-in. An observation explains a pattern and names the root-cause hypothesis. The pattern is a claim the system is willing to carry forward.

A companion that helps people open up.

Most consumer health products feel like a dashboard you have to read. Long supplement lists, biometric tiles, urgency-coded notifications, status badges that demand triage. The user is doing more work to use the product than the product is doing for them.

LifeJet runs the other direction. The user opens the app and starts a conversation, by chat or by voice, about bad sleep, an inflammation flare, a hard week. The conversation surface gives them almost nothing to manage. The persistent screens only step forward after the conversation has produced something durable.

Most of the playbook plays out as conversation; the screens are how the work persists.

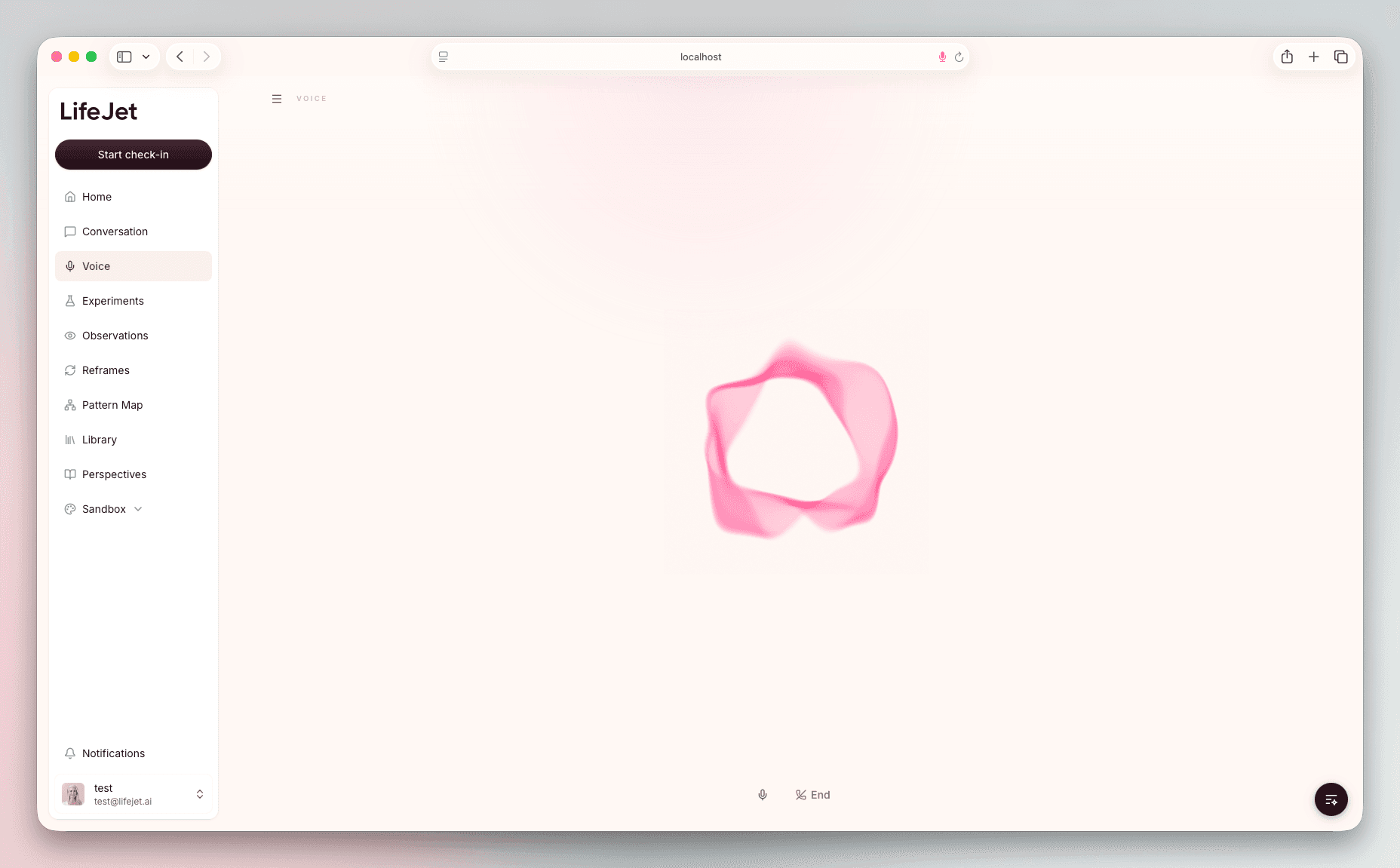

A voice that slows people down.

The voice is built to slow people down. The model speaks in two or three short sentences with deliberate pauses, soft articulation, slightly elongated vowels, slow enough that the listener has time to absorb each thing said. One question at a time. Silence is fine.

It mirrors before it analyzes. It can sit through silence, talk a user through a breathing pattern, walk them through a visualization, or stay quiet while they figure out what to say. When someone shares something hard, the model acknowledges it simply before doing anything else.

Same playbook as the text mode. Only the delivery overlay differs. Voice is one modality among others today, but it is also the medium this product is built for. As the underlying technology matures, the current interface architecture is meant to evolve into a fully voice-driven experience.

Experiment, Reframe, Observation.

Cards render only when the agent has produced something worth carrying forward. The Experiment Card carries the deliverable for the keystone action: a name, the action itself, what to keep fixed, the tracking cue, the success threshold, and a lifecycle state with a versioning chain. The Reframe Card saves a sharper question the user can hold onto. The Observation Card is the promoted version of a pattern: status, explanation, and root-cause hypothesis in one readable object.

The check-in is embedded where it belongs: inside the active experiment. The mirror, the system explanation, the cautions, and the closing truth all stay as conversational text or speech. Wrapping them in cards would make them feel clinical and packaged.

Home shows what you're trying.

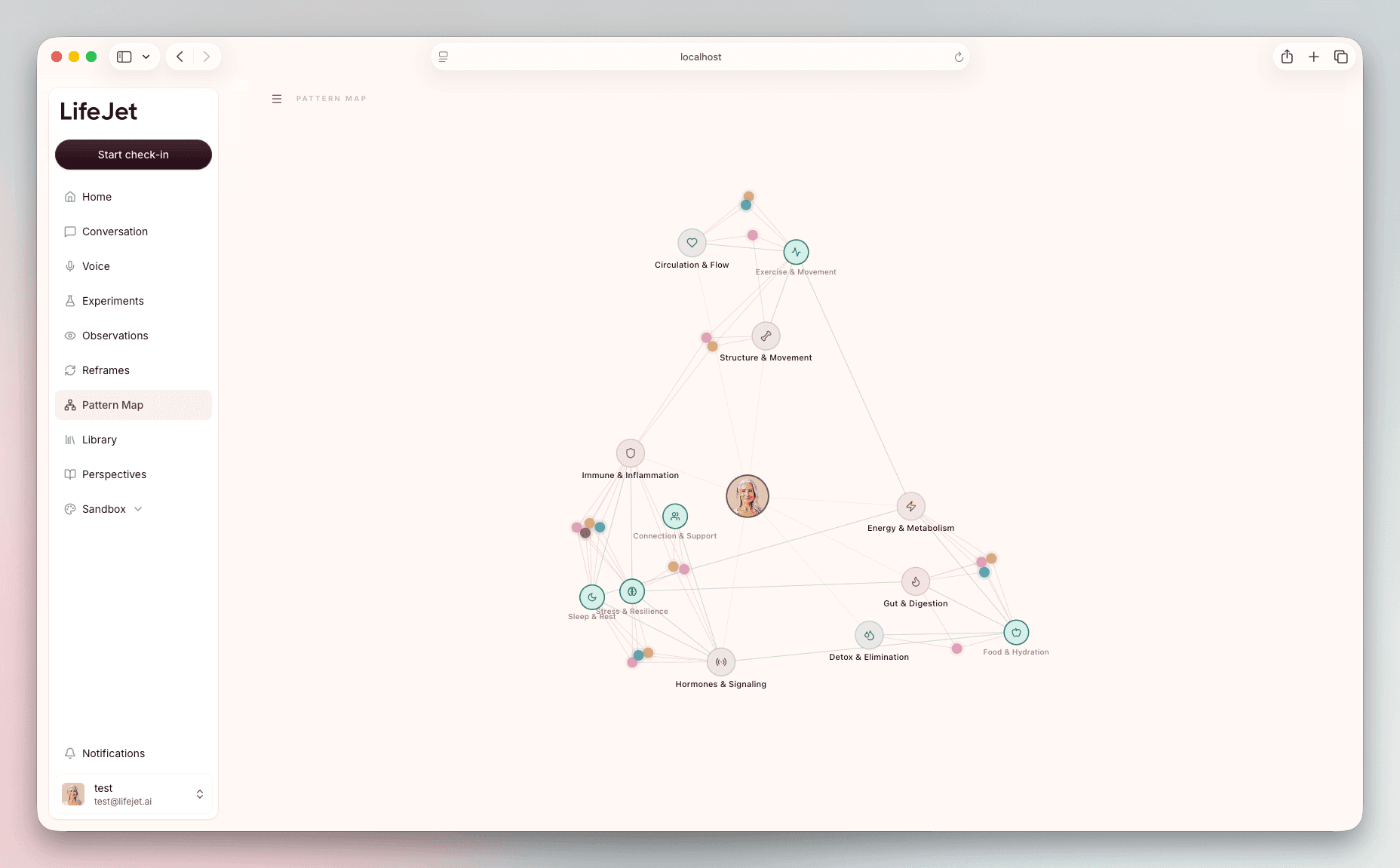

Outside the conversation, a quiet persistent layer mirrors the objects the agent has earned. Home can center the current experiment and next check-in, then widen into the relevant pattern, the systems already explored, and the most recent observation. /experiments lists active tests with lifecycle state and progress. /reframes is the saved-question library. /observations holds the patterns the agent has promoted to user-facing. /pattern-map turns the user's accumulating experiments, observations, and lifestyle factors into a connected model they can inspect.

The persistent layer reads the same artifacts the agent wrote.

Warm rose on layered shadow.

The visual system runs counter to the cool-blue-dashboard convention the rest of the category lives in. Material depth comes from inset shadows and shadow rings, so interactive surfaces feel like physical objects under the cursor. Rose is reserved for status (active experiment, current focus, save action) so the accent stays meaningful at every appearance. Typography carries hierarchy first.

The marketing site at lifejet.ai is built on the same component library, so a visitor arriving from outside lands on the same surface they'll work inside.

The decisions specific to LifeJet.

The agentic playbook and the product surface only work if a few engineering decisions hold up the rest. Most agentic products end up wiring two stores (one for the chat surface, one for the persistent layer) and spending the next three quarters keeping them in sync. Most try to infer artifact lifecycle after the fact, counting check-ins or thresholding on recency. Most ship voice as a second client, with its own state, its own writes, its own divergence.

I made three calls up front. Cards in the thread are rows in the database. The agent's lifecycle judgments are first-class schema fields, written at the same moment the card renders. Voice and chat write to the same artifact tables.

The rest of the stack is commodity by choice (Next.js, Vercel AI SDK on Sonnet 4, OpenAI gpt-realtime through LiveKit, Supabase, Mem0) so the build effort goes toward what's actually unique to LifeJet.

Both surfaces write to one artifact layer. A user's voice session and chat turn write to the same memory; the next session loads it back.

The card in the thread is the row in the database.

When an assistant turn finalizes, an extraction pipeline reads the streamed tool parts, types each card against its target table (Experiment, Reframe, or Pattern), and writes it through to Supabase. The agent never makes two decisions, one for the chat surface and one for the persistent layer. It renders a card; the card is the row; the row is what /experiments and /reframes load.
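The extraction step can be sketched as a pure function from tool parts to row writes. Everything named here is an assumption for illustration: the `ToolPart` shape, the tool names, the table names, and the `user_id` column. The real pipeline reads Vercel AI SDK tool parts and writes to Supabase, neither of which is shown.

```typescript
type Table = "experiments" | "reframes" | "patterns";

interface ToolPart {
  toolName: string;
  output: Record<string, unknown>;
}

interface RowWrite {
  table: Table;
  row: Record<string, unknown>;
}

// Hypothetical mapping from card-producing tools to their target tables.
const TABLE_FOR_TOOL = new Map<string, Table>([
  ["experimentPlan", "experiments"],
  ["reframeSet", "reframes"],
  ["capturePattern", "patterns"],
]);

// Turn the tool parts of one finalized turn into database writes.
// Parts from tools with no target table (e.g. suggestions) are skipped.
function extractArtifacts(parts: readonly ToolPart[], userId: string): RowWrite[] {
  const writes: RowWrite[] = [];
  for (const part of parts) {
    const table = TABLE_FOR_TOOL.get(part.toolName);
    if (!table) continue;
    writes.push({ table, row: { ...part.output, user_id: userId } });
  }
  return writes;
}
```

Because the row is derived directly from the tool output, there is no second decision to make for the persistent layer: what rendered is what gets stored.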

The agent's decisions ride with the tool call.

Most agentic products try to infer state after the fact: count check-ins, look at recency, threshold against a heuristic. LifeJet doesn't. Every deliverable tool call carries the agent's explicit decision: lifecycle state, whether to promote a pattern to user-facing, whether the new artifact supersedes a prior one (and if so, which). Those fields land directly in the schema. The system trusts the agent's judgment, and every artifact has a versioning chain the user can follow forward.
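A sketch of what "explicit decision" could mean at the boundary: the tool payload is rejected unless the agent states every lifecycle field itself, so nothing is inferred afterward. The state names and field names are hypothetical; the source only says that lifecycle state, promotion, and supersession ride with the call.

```typescript
// Hypothetical lifecycle states; the source does not enumerate them.
const LIFECYCLE_STATES = ["proposed", "active", "completed", "retired"] as const;
type LifecycleState = (typeof LIFECYCLE_STATES)[number];

interface AgentDecision {
  lifecycleState: LifecycleState;
  promoteToUserFacing: boolean;
  supersedesId: string | null; // null must be stated, not omitted
}

// Validate the decision fields on a tool payload. No defaults are filled in:
// a missing field is an error, not something to guess later.
function parseDecision(input: Record<string, unknown>): AgentDecision {
  const state = LIFECYCLE_STATES.find((s) => s === input.lifecycleState);
  if (!state) throw new Error("lifecycleState missing or unknown");

  const promote = input.promoteToUserFacing;
  if (typeof promote !== "boolean") throw new Error("promoteToUserFacing must be a boolean");

  const raw = input.supersedesId;
  let supersedesId: string | null;
  if (typeof raw === "string") supersedesId = raw;
  else if (raw === null) supersedesId = null;
  else throw new Error("supersedesId must be a string or null");

  return { lifecycleState: state, promoteToUserFacing: promote, supersedesId };
}
```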

Voice and chat read from one artifact layer.

The voice agent runs in its own app on its own runtime: OpenAI's gpt-realtime through LiveKit Agents, with Deepgram Flux handling streaming transcription. When a voice session ends, the transcript hands off to a finalize endpoint on the web app that runs it through the same artifact extraction pipeline as a chat turn, and memory autosave fires the same way.
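The handoff can be pictured as two thin entry points that converge on one function. This is a simplified sketch: the names and the `Turn` shape are assumptions, the steps are synchronous here for brevity where real handlers would be async, and no LiveKit or Deepgram API is shown.

```typescript
interface Turn {
  userId: string;
  source: "chat" | "voice";
  text: string;
}

// The two shared steps, injected so either surface can reuse them.
interface Pipeline {
  extractArtifacts(turn: Turn): void;
  autosaveMemory(turn: Turn): void;
}

// Single code path: artifacts first, then memory, regardless of surface.
function finalizeTurn(turn: Turn, pipeline: Pipeline): void {
  pipeline.extractArtifacts(turn);
  pipeline.autosaveMemory(turn);
}

// Chat calls the shared path directly after each assistant turn.
function finalizeChatTurn(userId: string, text: string, pipeline: Pipeline): void {
  finalizeTurn({ userId, source: "chat", text }, pipeline);
}

// Voice joins the transcript when the session ends, then uses the same path.
function finalizeVoiceSession(userId: string, transcript: readonly string[], pipeline: Pipeline): void {
  finalizeTurn({ userId, source: "voice", text: transcript.join("\n") }, pipeline);
}
```

The voice runtime stays separate, but it owns no writes of its own; the only thing it contributes is the transcript.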

An experiment created during a voice call shows up on the home screen alongside experiments created in chat. A reframe a user saved while talking is loaded into the next text session as memory context. A pattern the agent promoted during a chat session shows up on /observations and informs the next voice call.

The user's relationship with LifeJet is one continuous thread.

What solo means here.

I designed and built it end to end: the agentic playbook, the tools, the artifact extraction pipeline, the repository, the memory schema, the persistent layer, the cards, the chat surface, the voice agent, the auth flow, the Supabase schema and policies, the design system, and the marketing site at lifejet.ai. Each piece had to fit cleanly with whatever I touched next.

What shipped.

LifeJet is live at lifejet.ai with 500 on the waitlist. The agentic playbook, the auto-extracted artifacts, the active-experiment check-ins, the saved reframes, the surfaced observations, the voice modality, and the shared artifact layer feeding the pattern map are all running in production. Every session opens with the relevant memory already loaded, and the next surface can show what the user is trying, what LifeJet has noticed, and how those pieces connect.

Praise

I implicitly trust David to make product and technology decisions that benefit the company. He's been instrumental in turning my rough vision into a real, working product. I don't second-guess a call he's made.

Mike Moss, Co-founder & CEO, LifeJet